How to Start Learning About AI and Machine Learning in 2026 Without Getting Overwhelmed

If you have ever tried reading about AI or ML, you were probably confused by the sheer volume of information and hype. With the recent headlines about generative AI and foundation models, the two fields can seem so massive that they feel out of reach. Beginners often ask, “How can I ever learn to use this?” That doubt leads to discouragement and, eventually, to quitting before ever starting. AI and ML Made Simple for 2026 is designed to help beginners navigate this overwhelming landscape.

The best way to begin learning AI and ML is to take small steps that lead to practical, real-world solutions rather than continuously chasing new ML models. There are too many today (over 300), and learning to use all of them would take several years. AI and ML Made Simple for 2026 lays out a clear path for beginners that builds confidence and provides the knowledge needed to succeed without burning out.

Getting Started: Explanations to Help You Better Understand AI and ML

A technology (or group of related technologies) may be considered “artificial intelligence” if it can simulate human-like reasoning, learn from experience, and make decisions. Machine learning is a subset of AI: a set of tools and methods that help machines improve with data, without needing explicit instructions for every single task.

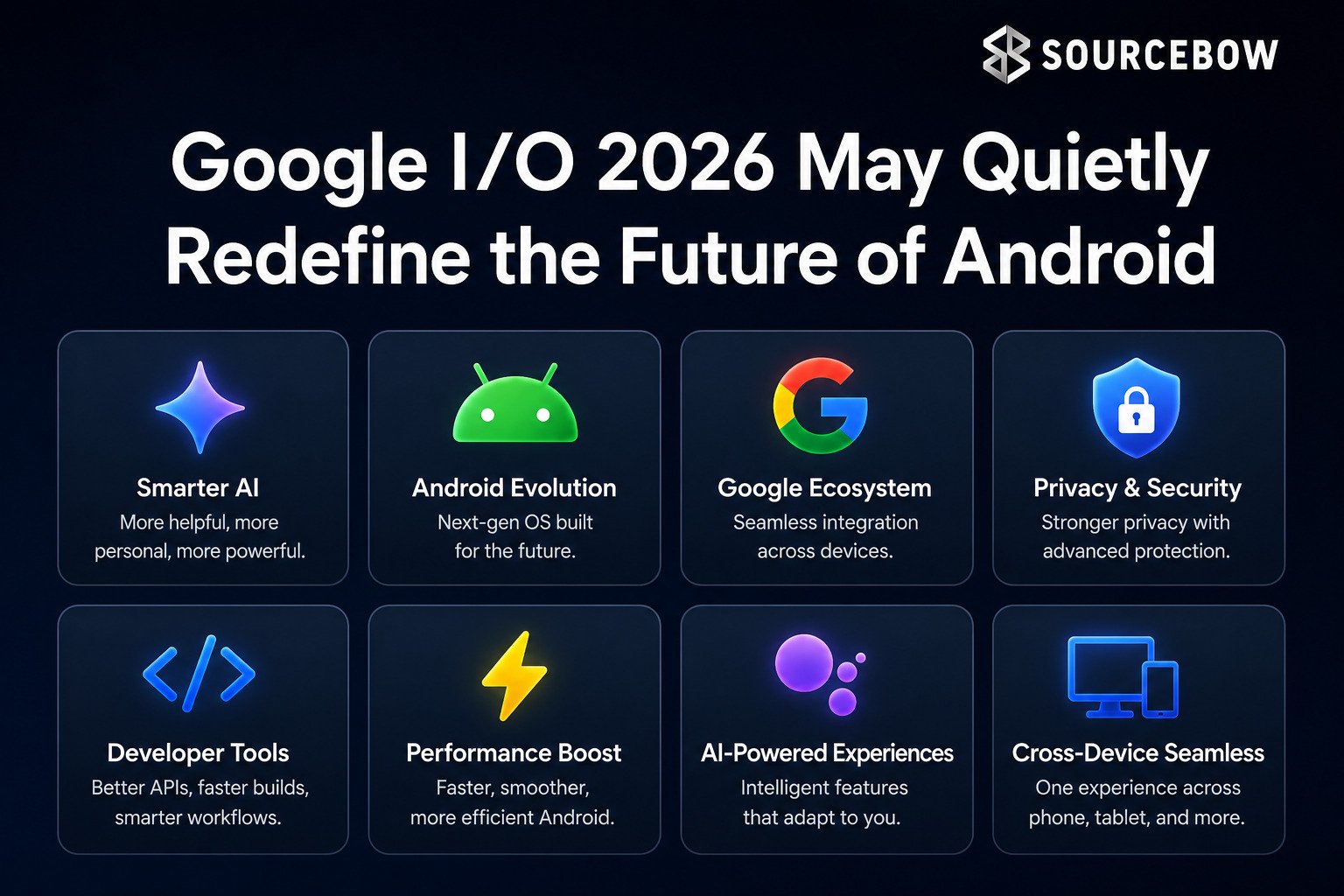

In 2026, the landscape has grown a lot. Generative AI, foundation models, and decision-making systems are everywhere. It’s no longer just about clever algorithms. How big models, massive datasets, and cloud services work together is what really powers applications today. People are also talking about multi-modal AI, which can understand text, images, and audio all at once. Fine-tuning models and running AI on devices rather than just servers are becoming standard practices.

There are three flavors of learning to keep in mind. Supervised learning is when the machine gets labeled data, like predicting house prices from features. Unsupervised learning searches for patterns in the data itself, such as grouping customers by how they behave (clustering). Reinforcement learning is trial-and-error learning guided by a reward system, much like how a human learns; training a robot or AI agent to play games is an example.
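To make the first two flavors concrete, here is a minimal sketch in plain Python. The numbers, the `fit_line` and `two_means` helpers, and the choice of a straight-line fit and a two-cluster split are all assumptions made for illustration; a real project would more likely lean on a library such as Scikit-learn.

```python
# Supervised learning: labeled examples (size -> price) are used to fit a line.
# The data below is made up purely for illustration.
sizes = [50.0, 80.0, 100.0, 120.0]       # feature: floor area in square metres
prices = [150.0, 240.0, 300.0, 360.0]    # label: price in thousands


def fit_line(xs, ys):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


slope, intercept = fit_line(sizes, prices)
predicted_price = slope * 90.0 + intercept  # prediction for an unseen 90 m^2 home


# Unsupervised learning: no labels, just group similar values (1-D k-means, k=2).
monthly_spend = [10.0, 12.0, 11.0, 95.0, 100.0, 105.0]


def two_means(values, iterations=10):
    """Split values into two clusters around two moving centers.

    Assumes the values are not all identical, so neither cluster is empty.
    """
    low, high = min(values), max(values)  # initial centers
    for _ in range(iterations):
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return group_low, group_high


low_spenders, high_spenders = two_means(monthly_spend)
```

The supervised half needs the `prices` labels to learn anything, while the unsupervised half only ever sees `monthly_spend` and discovers the two groups on its own.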

Aside from algorithms, ethics, explainability, and low-code tools are growing in importance. Engaging with them early in your work will help you develop projects responsibly and practically.

A Good Math Foundation Helps You Learn AI

To a lot of people, the math in AI can seem intimidating; however, it doesn’t have to be that way. It is less about memorizing formulas and more about understanding how models learn and how to reason with data.

You will need linear algebra to represent data as vectors and matrices, which is important for building neural networks. Calculus explains how models improve through optimization methods such as gradient descent. Probability and statistics let you make predictions, describe distributions of data, and quantify uncertainty. Discrete math constructs such as graphs and sets help you build algorithms. Finally, optimization concepts help your models reach good performance without wasting computational resources.
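Gradient descent is easier to grasp once you watch it run. The sketch below minimizes a toy loss, L(w) = (w - 3)^2; the loss, the starting point, the learning rate, and the function names are illustrative assumptions, not a recipe for real models.

```python
def gradient_descent(gradient, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to approach a minimum."""
    w = start
    for _ in range(steps):
        w -= learning_rate * gradient(w)
    return w


# Toy loss: L(w) = (w - 3)^2, whose derivative is dL/dw = 2 * (w - 3).
# The minimum sits at w = 3.
def loss_gradient(w):
    return 2.0 * (w - 3.0)


w_best = gradient_descent(loss_gradient, start=0.0)
print(f"w after training: {w_best:.4f}")
```

Each step moves `w` a little in the direction that lowers the loss, which is the same idea neural networks apply to millions of parameters at once.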

Great resources are readily available in 2026, such as Khan Academy, MIT OpenCourseWare, and textbooks like Mathematics for Machine Learning. Mixing study with coding practice is a great way to make abstract ideas real.

Programming Transforms Theory into Practice

Programming transforms theoretical concepts into practical applications. Python remains the language of choice thanks to its simplicity, expressiveness, and vast array of libraries covering every step of AI development, so it makes sense to start there.

The key libraries and tools to use as you start to get comfortable include:

- NumPy and Pandas for data manipulation

- Matplotlib, Seaborn, Plotly for data visualization

- Scikit-learn for traditional machine learning

- TensorFlow, PyTorch, JAX for deep learning

Other languages can be useful too: R for statistical reasoning, and C++ and Java where performance matters. Git (version control software) and notebooks such as Jupyter or Google Colab will allow you to experiment easily. Although cloud services (AWS, GCP, Azure, etc.) are gaining importance, local projects are an acceptable approach to beginning your work.

Its ease of use, and the ability to move from data wrangling to model deployment with minimal boilerplate code, have made Python the go-to choice for researchers and engineers developing AI. The wealth of open-source libraries, backed by a vibrant community, makes it easy to build prototypes, share your results, and iterate quickly.

How to Handle Your Data

Data is what powers AI; even a sophisticated model will fail if the data used to train it is in poor shape. To build an effective AI pipeline, data must be collected, cleaned, transformed, and explored.

- Collecting Data: Start with reputable datasets from sources like Kaggle, UCI Machine Learning Repository, or company-provided data.

- Data Cleaning: Fill in missing information, eliminate duplicates, and fix obvious mistakes.

- Data Transformation: Normalize continuous data, convert categorical data to numerical format, and engineer new features for models.

- Data Exploration: Visualizations (through charts) and metrics (like statistics) can help highlight patterns, trends, and anomalies that might otherwise go unnoticed.
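The cleaning and transformation steps above can be sketched in a few lines of plain Python. The records, the column names, and the `clean` and `transform` helpers are hypothetical; in practice a library such as Pandas would likely handle these steps, but writing them out by hand shows what each one does.

```python
from statistics import mean

# Hypothetical raw records: one duplicate row and one missing age.
raw_rows = [
    {"age": 25, "city": "Paris"},
    {"age": None, "city": "Lima"},
    {"age": 35, "city": "Paris"},
    {"age": 25, "city": "Paris"},  # exact duplicate of the first row
]


def clean(rows):
    """Drop exact duplicates, then fill missing ages with the mean age."""
    unique, seen = [], set()
    for row in rows:
        key = (row["age"], row["city"])
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))  # copy so the input is left untouched
    mean_age = mean(r["age"] for r in unique if r["age"] is not None)
    for row in unique:
        if row["age"] is None:
            row["age"] = mean_age
    return unique


def transform(rows):
    """Min-max scale age to [0, 1] and one-hot encode city.

    Assumes at least two distinct ages, so the scaling range is non-zero.
    """
    ages = [r["age"] for r in rows]
    lo, hi = min(ages), max(ages)
    cities = sorted({r["city"] for r in rows})
    return [
        {
            "age_scaled": (r["age"] - lo) / (hi - lo),
            **{f"city_{c}": int(r["city"] == c) for c in cities},
        }
        for r in rows
    ]


features = transform(clean(raw_rows))
for row in features:
    print(row)
```

Filling gaps with the mean is only one possible choice; whether it is appropriate depends on the dataset.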

As of 2026, data exists in many forms, including tables, text, images, audio, and video. Platforms such as Spark or Hadoop can handle huge datasets, but beginning with small local datasets is perfectly acceptable.

Hands-On Experience Without Pressure

Reading about AI is a good first step; however, true confidence comes through practical experience. You do not need to start with massive projects; your projects will grow as your abilities do.

Some examples of beginner-level projects include predicting the price of homes, analyzing social media sentiment, or classifying images of handwritten digits from the MNIST dataset.

Some examples of intermediate-level projects include creating chatbots, building recommendation engines, or constructing a basic stock price trend application.

Some examples of advanced-level projects include producing generative artwork, utilizing multi-modal forms of AI, and implementing reinforcement learning within games/robotics.

Sharing each of these projects on platforms such as GitHub, or in your own personal web portfolio, matters. It shows your capability to future employers or clients, and it tracks your progress over time.

Staying Updated and Understanding Deployment

AI evolves fast, and 2026 is no exception. Some trends worth keeping an eye on:

- Foundation Models and Generative AI: Fine-tuning and practical applications are more accessible than ever.

- AI in the Cloud: Tools for AutoML, NLP, and multi-modal AI simplify scaling and experimentation.

- Edge AI: Running AI on devices like phones or IoT gadgets reduces latency and protects privacy.

- Ethics and Explainability: Understanding model decisions, fairness, and bias is non-negotiable.

- Low-Code Tools: Useful for quick prototyping, but deep understanding is still essential for serious projects.

Deployment skills matter too. Knowing how to serve models via APIs, containerize with Docker, manage orchestration with Kubernetes, and monitor models with MLOps adds real-world value. Start small: prototype locally, then explore cloud endpoints, then orchestrate for larger scale.
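Serving a model via an API mostly means turning a request into model input and the model output into a response. The sketch below shows that core logic without any web framework; `predict_price` is a stand-in for a trained model, and the `size_m2` field and `handle_request` function are hypothetical names chosen for illustration. A web framework route would wrap a function like this.

```python
import json


def predict_price(size_m2):
    """Stand-in for a trained model: a fixed linear rule (hypothetical)."""
    return 3.0 * size_m2


def handle_request(body):
    """Parse a JSON request body, run the model, return (status, JSON body)."""
    try:
        payload = json.loads(body)
        size = float(payload["size_m2"])
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, a missing field, or a non-numeric value.
        return 400, json.dumps({"error": 'expected JSON like {"size_m2": 90}'})
    return 200, json.dumps({"predicted_price": predict_price(size)})


status, response = handle_request('{"size_m2": 90}')
print(status, response)
```

Keeping the prediction logic separate from the web layer makes it easy to test locally before moving to a cloud endpoint.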

Tapping Into the AI Community

Learning alone can be isolating. Communities offer feedback, support, and motivation.

- Online Spaces: Reddit, Stack Overflow, LinkedIn groups, Discord servers.

- Competitions: Kaggle is excellent for practice and recognition.

- Meetups and Hackathons: Offer hands-on experiences and networking opportunities.

- Open Source Projects: Contributing to PyTorch or Hugging Face provides hands-on learning.

Although experiencing imposter syndrome is normal, gaining confidence can come from simply showing up week after week and building small projects. Connection with people in your community can help provide perspective, accountability, and sometimes even unexpected opportunities during your journey.

A Roadmap to a Year of Learning

Having a structured plan in place can help to turn what may have seemed like an overwhelming journey into measured growth. This is a learning path that is beginner-friendly:

- Months 1-2: Learn the basics of Python, basic mathematics, and how to handle data in Python.

- Months 3-4: Learn classic ML algorithms as well as the difference between supervised and unsupervised learning.

- Months 5-6: Delve into Deep Learning and Neural Networks.

- Months 7-8: Build small projects and participate in project competitions.

- Months 9-10: Study transformers, NLP, and foundation models.

- Months 11-12: Focus on model deployment, MLOps, and ethical deployment practices.

- After 12 months: Continue to experiment, read, and connect with your community!

Having even a basic roadmap makes it much easier to visualize the journey ahead of you. The goal should be continued forward momentum instead of trying to achieve everything at once.

The Real Magic Happens When You Deploy an AI Model

Getting a model to production is where the magic begins to happen: it is the move from experimentation to giving people something that actually works!

- APIs: Serve your model using the Flask, FastAPI, or Django framework.

- Containers: Package your model code & all dependencies together into a Docker image.

- Orchestration: Use Kubernetes for large-scale deployments.

- Monitoring: Track performance and decide when retraining is needed.

- MLOps: Keep versioning, collaboration, and reproducibility under control.
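As one way to picture the monitoring step, the sketch below tracks accuracy over a rolling window of recent predictions and flags when it drops too low. The `AccuracyMonitor` class, the window size, and the threshold are assumptions for illustration; production setups typically rely on dedicated monitoring tools and richer metrics.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions (a rolling window)."""

    def __init__(self, window_size=100, threshold=0.8):
        self.outcomes = deque(maxlen=window_size)  # True = correct prediction
        self.threshold = threshold

    def record(self, predicted, actual):
        """Store whether one prediction matched the true label."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return accuracy over the window, or None if nothing is recorded."""
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else None

    def needs_retraining(self):
        """Flag retraining once a full window falls below the threshold."""
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.threshold


monitor = AccuracyMonitor(window_size=4, threshold=0.75)
for predicted, actual in [(1, 1), (1, 1), (0, 1), (0, 1)]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.needs_retraining())
```

Waiting for a full window before raising the flag avoids reacting to the first few unlucky predictions.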