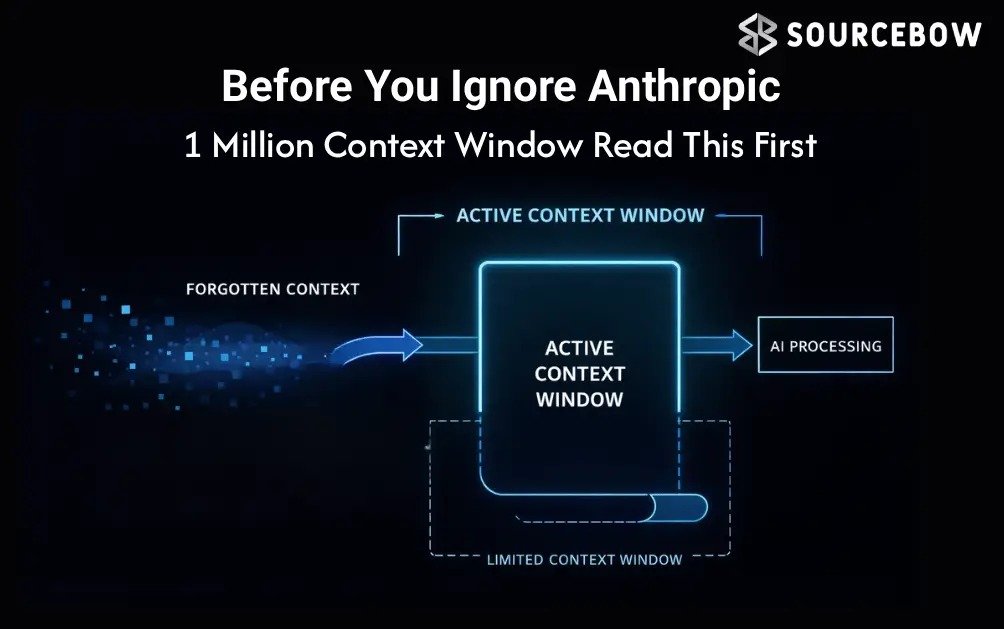

Long context has been a talking point for a while in AI. It promises vast memory, but a big number on paper only matters if you can actually use it. **Anthropic 1 Million Context** changes the math in a way that might actually make developers and product teams lean in. They’re not just giving you a bigger bucket; they’re rethinking how you fill it, how you pay for it, and how you pull useful facts out when you need them most. In other words, this isn’t spin about a bigger window; it’s about turning that window into a real tool you can design around.

In practical terms, the **Anthropic 1 Million Context** release brings a 1 Million context window to Opus 4.6 and Sonnet 4.6 with two big shifts: a flat pricing approach that makes large prompts cheaper to scale, and a focus on retrieval accuracy that means the model actually finds what you hid inside that massive pile of text. Those two pieces together are what make this release different from previous long-context pushes. It’s not just a headline trick; it’s a real step toward usable long-context workflows. Let me lay out what changes, why it matters, and how you might actually use it in the wild.

To understand why this is worth a closer look, you’ll want to keep a few ideas in view. Context size alone isn’t useful if the system can’t retrieve the right facts. Pricing can quickly derail a project if you’re always paying for more tokens than you actually use. And real-world usage often means messy, multi-document inputs where multiple facts live in different spots. **Anthropic 1 Million Context** is trying to address all three at once. The result isn’t a panacea, but it’s a meaningful nudge toward making long-context AI practical for real apps, especially where memory across chats, documents, and tool calls matters most.

Highlights

- Flat pricing for long context reduces bill shock as the window grows.

- Retrieval performance stays strong in larger windows, not just a pretty headline.

- Broader availability across Azure AI Foundry and Vertex AI expands adoption.

- Less aggressive context compression can improve stability in long-running agent workflows.

The core shift you should notice

Let’s start with the headline that actually changes how you plan tooling. Anthropic’s 1 Million context window isn’t just about cramming more text into a model; it’s about making that room genuinely usable. In practice, you’ll notice two things that alter how you design systems: the pricing and the retrieval quality. The pricing move is what you’ll feel first in your budget sheets. The retrieval quality is what you’ll notice in your results.

On the pricing front, Anthropic is using a flat pricing approach for long-context usage. That means the per-token rate for a 900,000-token request is the same as for a 9,000-token one, with no premium tier that kicks in for very long prompts. The total still grows with the number of tokens you send, but the long-context bill doesn’t jump just because the prompt crossed a size threshold. This reshapes the calculus for teams building document-heavy apps, large research pipelines, or agent workflows that keep memory across rounds. It’s still not universally cheaper for every job, but it flattens the usual curve in a very tangible way once you get past the first couple hundred thousand tokens.
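One way to read "flat pricing" is that the per-token rate does not change with prompt size. The sketch below contrasts that with a tiered scheme in which a request above some threshold is billed at a premium. The rate, threshold, and multiplier are hypothetical placeholders chosen for illustration, not Anthropic's published prices.

```python
def flat_cost(tokens: int, rate_per_mtok: float) -> float:
    """Dollar cost when every input token is billed at one rate."""
    return tokens / 1_000_000 * rate_per_mtok


def tiered_cost(tokens: int, rate_per_mtok: float,
                threshold: int = 200_000, multiplier: float = 2.0) -> float:
    """Dollar cost when a request above `threshold` tokens is billed
    entirely at a premium rate (hypothetical scheme for comparison)."""
    rate = rate_per_mtok * multiplier if tokens > threshold else rate_per_mtok
    return tokens / 1_000_000 * rate


RATE = 3.0  # hypothetical dollars per million input tokens

for n in (9_000, 200_000, 900_000):
    print(f"{n:>9,} tokens  flat=${flat_cost(n, RATE):.3f}  "
          f"tiered=${tiered_cost(n, RATE):.3f}")
```

Under these assumed numbers the two schemes agree for small prompts and only diverge past the threshold, which is where a flat rate would show up in a budget.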

That change matters because it makes it more practical to bring entire document sets into the prompt in a single pass. A rough example that got shared around the release hints at the jump from about 100 PDF pages to nearly 600 image-based PDF pages. If true, that’s a big leap for capabilities like in-app search, cross-document reasoning, and multi-document maintenance in a single agent session. If you’re building a productivity tool that needs to reason over dozens of reports or legal briefs at once, that could translate into real time saved and a smoother user experience.

Retrieval is the real story behind the number

Here’s the thing that often gets glossed over when a company flaunts a 1 Million context window: without solid retrieval, the extra space is wasted. You’ve probably seen this problem in action before: a model happily accepts a long prompt but can only retrieve one or two of the key facts buried in the middle. The rest becomes noise. The familiar “lost in the middle” problem in long-context models isn’t just a quirk; it’s a real bottleneck that undermines the usefulness of a giant window.

Anthropic’s newer Opus and Sonnet models claim state-of-the-art retrieval performance at around 256,000 tokens, hitting roughly 90 percent retrieval accuracy in a robust eight-needle benchmark. The eight-needle test might sound abstract, but it’s a much closer proxy to real-world tasks: you’re not just asking for one fact, you’re asking for multiple items scattered across notes, documents, and prior chats. The benchmark matters because it mirrors how agents actually work in production — juggling several details at once, across a sprawling context.
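To make the multi-needle idea concrete, here is a toy harness: it scatters several facts through filler text, asks a model for all of them, and scores the fraction recovered. It is not the eight-needle benchmark referenced above. The sentence templates, `edge_only_model`, and the 30% visibility cutoff are all invented for illustration, and a real test would swap in an actual model call.

```python
import random
import re
from typing import Callable


def build_haystack(needles: list[str], filler: str, total: int, seed: int = 0) -> str:
    """Scatter needle sentences at random positions among `total` filler sentences."""
    rng = random.Random(seed)
    sentences = [filler] * total
    for pos, needle in zip(rng.sample(range(total), len(needles)), needles):
        sentences[pos] = needle
    return " ".join(sentences)


def needle_recall(answer: str, expected: list[str]) -> float:
    """Fraction of expected values that appear verbatim in the answer."""
    return sum(value in answer for value in expected) / len(expected)


def edge_only_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call: it only 'sees' the first and
    last 30% of the prompt, mimicking a model that loses the middle."""
    cut = int(len(prompt) * 0.3)
    visible = prompt[:cut] + " " + prompt[-cut:]
    return " ".join(re.findall(r"code-\d{3}", visible))


def run_trial(model: Callable[[str], str], n_needles: int = 8) -> float:
    """Build one haystack with `n_needles` facts and return the recall score."""
    values = [f"code-{i:03d}" for i in range(n_needles)]
    needles = [f"The access code for project {i} is {v}." for i, v in enumerate(values)]
    haystack = build_haystack(needles, "Nothing of note happened that day.", total=2000)
    question = "\n\nList every access code mentioned above."
    return needle_recall(model(haystack + question), values)


print(f"recall: {run_trial(edge_only_model):.2f}")
```

Running the same trial at several haystack sizes would give a rough recall-versus-length curve for whatever model you plug in.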

What matters most is how the performance scales as the window grows. In some systems, the moment you push toward a million tokens, the ability to retrieve accurately tanks. In the demonstration materials around this release, Gemini drops noticeably as the window expands; GPT-like models show a similar trend. Anthropic, by contrast, preserves more usable performance at larger scales. The trade-off isn’t zero loss, but it’s a considerably gentler decline. For developers who care about reliability over long sessions, that’s a meaningful improvement.

That shift changes the qualitative feel of long-context AI. It’s not that the 1 Million window is a magical, always-perfect memory. It’s that the room is now big enough and the retrieval is good enough to matter — enough to design features that rely on context across many steps, across documents, and across back-and-forth with tools and memories.

What this means for developers and real-world apps

For developers, the practical upshot is what you’d hope for: fewer moments when you feel stymied by the memory limits of your AI. You can push larger chunks of text in one go, you can keep more history in play, and you can do more with multi-step workflows without constantly sacrificing earlier context. The corollary is less context compaction — the dreaded process of trimming earlier information to stay under a token cap. Less compaction means fewer chances for an agent to forget a critical detail mid-project, which translates to fewer thread breaks and more coherent long-running tasks.

In real-world agent scenarios, where you might have a running conversation as you fetch data from a knowledge base, call external tools, or perform multi-hop reasoning, that extra room starts to feel tangible. Teams can design memory layers that actually reference past events, documents, or tool outputs without an expensive rewrite to squeeze everything back in. Early reports from practitioners suggest about 15 percent fewer context compactions in some workflows, and that isn’t nothing. That kind of improvement reduces both latency and error drift in multi-turn conversations or long research sessions.
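A small simulation shows why a larger window tends to mean fewer compactions. It assumes a deliberately simple policy (when the history exceeds the window, everything is replaced by a fixed-size summary) and made-up turn sizes, so the counts illustrate the mechanism and are not meant to reproduce the 15 percent figure.

```python
def count_compactions(turn_tokens: list[int], window: int,
                      summary_tokens: int = 50_000) -> int:
    """Count how often the running history overflows `window` and has to be
    replaced by a summary of `summary_tokens` tokens (hypothetical policy)."""
    used = 0
    compactions = 0
    for tokens in turn_tokens:
        used += tokens
        if used > window:
            used = summary_tokens  # history collapsed into a summary
            compactions += 1
    return compactions


# Hypothetical session: 100 tool calls that each add 20k tokens of output.
turns = [20_000] * 100

small = count_compactions(turns, window=200_000)
large = count_compactions(turns, window=1_000_000)
print(f"200k window: {small} compactions, 1M window: {large} compactions")
```

Each compaction is a point where detail can be lost, so the count is a rough proxy for how often an agent risks forgetting something it needed.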

Another practical implication is the broader platform reach. Anthropic’s features aren’t locked to a single API. They’re accessible via Microsoft Azure AI Foundry and Google Vertex AI, widening the circle of potential adopters. If you’re already operating within those ecosystems, this release fits in more naturally than a lot of other recent AI capability jumps. It becomes a matter of choosing the right tool for the job rather than contorting a solution to fit a new, siloed API.

And let’s be honest: price matters in real product development. The flat pricing model helps here too. If you’re building tools that carry memory across dozens of documents and sessions, you don’t want the bill to balloon as you scale. With access to higher token counts at predictable costs, you can forecast more accurately and iterate faster. You might still optimize with selective contexts, but you won’t feel forced into aggressive trimming just to keep the bill in check.

The limits, caveats, and the new balance

All that said, this isn’t a universal free pass for every use case. The long-context advantage tends to show up most clearly in larger-scale tasks. If your workload stays well under 200,000 tokens per prompt, the economics may still feel premium. It’s not that you’re paying more upfront — you’re just not getting a dramatic discount for small prompts. In other words, the sweet spot for the new pricing is where you’re regularly using big documents, broad conversations, or multiple memory rounds in a single session.

Latency remains a practical consideration. A bigger context window means more data for the model to process on every request. For real-time or near-real-time apps, that can translate into higher response times. The trick is balancing when you truly need the million-token window against when you’re fine with a smaller, faster pass. That’s where hybrid approaches may shine: combining a fast, local retrieval pass with a longer, more thorough pass over a million-token context when needed.
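A hybrid setup needs some rule for choosing between the two passes. The router below is one possible sketch. The `Route` type, the 10-second cutoff, and the `needs_cross_doc` flag are assumptions made for illustration, and real thresholds would come from your own latency measurements.

```python
from dataclasses import dataclass


@dataclass
class Route:
    mode: str    # "retrieval" or "full_context"
    reason: str


def choose_route(corpus_tokens: int, latency_budget_s: float, needs_cross_doc: bool,
                 window: int = 1_000_000, slow_threshold_s: float = 10.0) -> Route:
    """Pick a fast retrieval pass or a full long-context pass (hypothetical rules)."""
    if corpus_tokens > window:
        return Route("retrieval", "corpus does not fit in the window")
    if latency_budget_s < slow_threshold_s and not needs_cross_doc:
        return Route("retrieval", "tight latency budget and a single-document question")
    return Route("full_context", "corpus fits and the task benefits from seeing everything")


print(choose_route(50_000, latency_budget_s=2.0, needs_cross_doc=False))
print(choose_route(600_000, latency_budget_s=60.0, needs_cross_doc=True))
```

Keeping the decision in one small function makes it easy to log which path each request took and to tune the thresholds later.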

RAG (retrieval-augmented generation) isn’t dead; it’s evolving. Even with a large, capable context, you’ll still benefit from clever retrieval strategies: layered retrieval, more nuanced context selection, and perhaps hybrid systems that mix embedding-based search with other indexing methods. The upshot is not “do more with less” but “do more with smarter memory management and smarter retrieval choices.” In practice: design for the actual task, not the theoretical maximum context window.

The bigger takeaway you can act on

What makes this release different from the techno-talk around other million-token claims is the combination of usable room, better retrieval, and a pricing approach that doesn’t punish you for thinking bigger. It isn’t about having the biggest warehouse; it’s about being able to reach the important shelves reliably. For developers, that means you can prototype longer-running agents, embed more documents in a single run, and keep memory alive across interactions without a constant game of token Tetris. For product teams, it means potential improvements in user experience: longer sessions, more thorough summaries, and more accurate answers drawn from deeply nested sources.

If you’re evaluating whether to experiment with a long-context approach in your stack, here are a few practical steps you can take now: start with a medium-sized corpus and measure retrieval accuracy across your typical queries; test end-to-end latency with larger prompts; compare the cost curve against your current RAG strategy; and design your agent workflow to leverage both large memory windows and targeted compact prompts where speed matters most. The goal isn’t to throw everything into the biggest window — it’s to use the window you have in the most productive way possible, with a retrieval layer that actually serves the user’s needs.
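For the latency step, a minimal timing harness might look like the following. `stub_model` is a hypothetical placeholder that simply does work proportional to prompt length. In a real test you would replace it with your actual API call and use prompts drawn from your own corpus.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[str], str], prompt: str,
                    runs: int = 5) -> dict[str, float]:
    """Time `call(prompt)` several times and summarise the wall-clock samples."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call(prompt)
        samples.append(time.perf_counter() - start)
    return {"median_s": statistics.median(samples), "max_s": max(samples)}


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real API call; work grows with prompt length."""
    return str(sum(ord(ch) for ch in prompt) % 97)


for size in (10_000, 100_000, 1_000_000):
    stats = measure_latency(stub_model, "x" * size)
    print(f"{size:>9,} chars  median={stats['median_s']:.4f}s  max={stats['max_s']:.4f}s")
```

Reporting the median alongside the maximum helps separate typical response time from the occasional slow outlier.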

In the end, long context is only as valuable as the intelligence you can pull from it. Anthropic’s approach, bigger room plus better retrieval plus friendlier pricing, is a meaningful nudge toward making long-context AI truly usable at scale. It’s not a perfect guarantee for every project, but it’s a notable step toward systems that remember more and forget less, without bankrupting your budget in the process. And if you’re building the next generation of document-heavy apps or long-running agent workflows, that combination could be exactly what you’ve been waiting for.

So yes, the 1 Million context window is a big number. The real question is whether the system behind it can turn that number into real value in your product. Based on the current signals (robust retrieval, broader platform support, and a pricing curve that makes scale less painful), the early signs look promising. It’s not the final word on long-context AI, but it’s a compelling chapter that makes you rethink what a memory window can and should do in the wild. As always, the best test will be your own experiments and your users’ real-world needs. Are you ready to try a bigger memory and see what sticks?

If you’ve experienced long-context frustration before, you’re not imagining things. The update is not a miracle cure, but it does tilt the odds toward long context being useful in practice. And in a world where teams juggle multiple documents, chats, and tool calls across hours of work, that can be the difference between a clever prototype and a reliable, scalable feature. The door is opening for longer, more coherent AI sessions, and for developers who know how to push through the initial setup to unlock genuine, tangible benefits.